How an operating system works often goes unnoticed during everyday computer use. A device powers on, a desktop appears, and applications launch within seconds. From the user's perspective the machine feels simple, almost effortless to operate.

Behind that smooth experience lies a layered control system. The operating system coordinates hardware components, organizes memory usage, launches applications, and manages communication between devices. Every action on a computer flows through this control layer.

Many people first encounter the topic when asking what an operating system is while learning programming or computer science. The question sounds basic, yet the answer reveals one of the most important systems inside modern computing. Without this central controller, programs would have no shared way to use hardware components and would conflict with one another.

The role of the OS becomes clearer during startup. When power reaches the motherboard, firmware begins initializing hardware devices. Storage controllers activate, memory modules respond, and the processor prepares to execute instructions. Only after these early checks finish does the system begin loading the main software environment.

That process introduces the real mechanics of how an operating system works. A bootloader loads the kernel into memory, allowing the system to start managing hardware resources. The kernel then prepares the environment where applications can run safely and efficiently.

Every step relies on the operating system acting as a coordinator. Hardware devices produce signals and data. Applications request resources and perform tasks. The OS ensures that both sides communicate without conflicts.

Learning this process helps beginners move beyond surface-level computer use. It also gives IT learners a clearer mental model of how systems behave under the hood. Topics like memory allocation, process scheduling, and device control begin to make sense once the operating system’s workflow becomes visible.

The Role of an Operating System

The operating system serves as the central manager of a computer system. Requests from applications and users for hardware resources, such as memory, storage, and devices, pass through this layer before reaching the hardware.

A concise definition describes an operating system as system software responsible for managing hardware resources and providing services for applications. This description highlights the OS as the coordinator of computing activity rather than a typical application program.

The meaning of the term becomes clearer when observing how hardware components interact. The processor executes instructions, memory stores active data, and storage devices hold long-term information. Each component operates at a different speed and requires coordination.

The OS provides that coordination. It allocates CPU time to running processes, assigns memory to programs, and ensures that hardware devices communicate with software correctly.

Many computer operating systems share the same fundamental responsibilities. They schedule processes so multiple programs appear to run at the same time. They organize storage through file systems, allowing users to create, access, and modify data.

Security and stability also fall under the OS control layer. Permission systems restrict access to sensitive resources, while process isolation prevents a single faulty program from crashing the entire system.

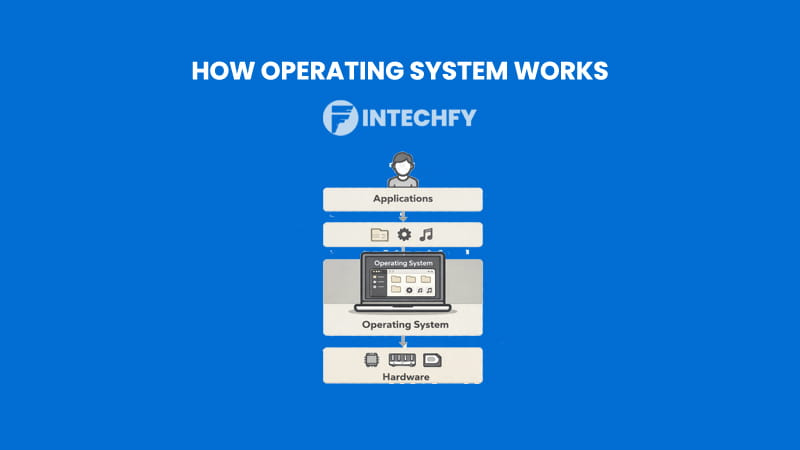

This structure explains why the operating system sits between applications and hardware. Programs interact with system services rather than directly controlling physical components. The OS translates software requests into hardware actions.

These responsibilities form the foundation of system behavior. Once this foundation is clear, the workflow that connects power-on events to running applications becomes easier to examine.

Operating System Workflow Diagram (Big Picture Overview)

A structured operating system diagram helps visualize the sequence that transforms powered hardware into a working computing environment. This workflow moves through several layers, beginning with hardware initialization and ending with user applications running on the system.

Each stage reveals part of how the OS works, showing how control gradually shifts from firmware routines to the operating system kernel and finally to user programs.

High-Level OS Workflow Explained

The process begins when the computer receives power. Hardware components initialize through firmware routines that verify essential devices such as memory, processors, and storage controllers.

Once initialization completes, the boot manager takes control and loads the operating system kernel from storage into memory. This moment marks the transition from firmware control to the operating system itself.

From that point, the kernel begins managing system resources. It prepares memory structures, initializes drivers, and establishes the core environment required for software execution.

A typical diagram of operating system workflow presents these layers in a vertical structure. Hardware forms the base layer. Firmware prepares the system during startup. The kernel introduces resource management, and system services enable applications to run.

This layered design reflects the broader operating system architecture used by modern systems such as Windows and Linux. Each layer isolates responsibilities while maintaining communication with the others.

Applications operate at the highest level of this structure. They interact with system services, which translate requests into instructions the kernel can process. The kernel then communicates with hardware devices.

The structure ensures stability and security. Programs cannot directly control hardware devices. Every request passes through controlled interfaces managed by the OS.

OS Workflow Summary Table

| Stage | What Happens | Main Component |
|---|---|---|
| Power On | Hardware initializes | Firmware |
| Bootloader | Loads kernel | Boot Manager |
| Kernel Start | Core control begins | Kernel |
| User Space | Applications run | OS Services |

This workflow summarizes how the system progresses from powered hardware to a fully interactive environment where applications can operate safely and efficiently.

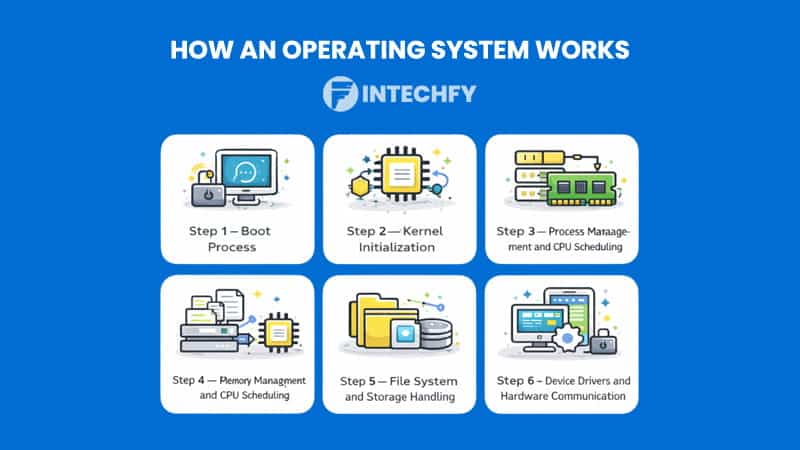

How an Operating System Works — Complete Step-by-Step Process

Modern computers appear responsive the moment a user presses the power button. Applications open quickly, files load, and the system reacts to input almost instantly. Behind that experience lies a structured startup sequence that reveals how an operating system works at a deeper technical level.

A computer cannot immediately launch applications after receiving power. Hardware must initialize, the system kernel must load into memory, and core services must prepare the environment where software will run. Each stage builds on the previous one until the machine reaches a usable state.

These early phases reveal key operating system functions. The OS initializes hardware communication, establishes memory control, and prepares the mechanisms required for process execution and device interaction. This sequence also forms the foundation of OS resource management, where the system begins organizing CPU usage, memory allocation, and hardware access.

The sections below start with the two phases of the startup lifecycle. The boot process activates hardware and loads the system kernel. Kernel initialization then establishes the core environment that allows applications to run. The later steps cover process scheduling, memory, storage, and device handling.

Step 1 — Boot Process: Where Everything Starts

The boot process marks the first stage of how an operating system works when a computer powers on. At this moment the machine contains inactive hardware components and stored system software, but nothing has begun executing yet.

During this stage the boot workflow transitions from hardware initialization to loading the system kernel into memory. Every modern system follows a similar structure, whether it runs Windows or relies on the Linux boot process used by many servers and development environments.

Two major phases occur during this stage: hardware initialization and operating system loading.

Power On and POST

When a computer receives electrical power, the processor immediately begins executing firmware instructions stored on the motherboard. These instructions belong to system firmware such as BIOS or UEFI.

The first responsibility of this firmware is hardware verification through the Power-On Self-Test. POST checks whether essential components such as RAM, CPU, and storage devices respond correctly.

Memory modules undergo quick validation tests. Storage controllers are identified. Input devices such as keyboards become available for early system commands. If a critical component fails during POST, the firmware halts the startup sequence and signals an error.

This stage is crucial to operating system architecture. The OS cannot function until the hardware environment is stable and ready for software execution. Firmware ensures that the processor, memory, and storage devices are operational before transferring control to the next stage.

Once hardware initialization completes successfully, the firmware searches for a bootable device. That device usually contains the bootloader responsible for loading the operating system itself.

Bootloader and OS Loading

After locating a valid boot device, firmware transfers control to the system bootloader. The bootloader is a small program designed specifically to locate and load the operating system kernel from storage.

According to GeeksforGeeks, booting is the process that starts when a computer is powered on and loads the operating system from secondary storage into RAM so it can manage hardware and software operations.

This step explains the significance of the bootloader. The OS cannot execute directly from disk storage. It must first be placed into main memory so the processor can access instructions rapidly.

During this phase the bootloader identifies the system kernel file, loads it into RAM, and prepares the parameters required for startup. Configuration information such as hardware settings and startup options may also be passed to the kernel.

The process remains consistent across platforms. Windows uses a boot manager that loads the Windows kernel. Linux systems rely on bootloaders such as GRUB, which perform the same task for the Linux kernel.

Once the kernel enters memory and begins execution, the computer transitions from firmware control to the main operating system environment.
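The hand-off from firmware to bootloader to kernel can be sketched as a toy simulation. This is a simplified model and not how any real firmware is written: the `Machine` class, its fields, and the `quiet` boot parameter are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Machine:
    """Hypothetical machine model; field names are illustrative only."""
    ram_ok: bool = True
    cpu_ok: bool = True
    # Each entry: (device name, kernel image bytes or None if not bootable)
    disks: list = field(default_factory=list)
    ram: dict = field(default_factory=dict)


def post(machine: Machine) -> None:
    """Firmware stage: halt early if an essential component fails."""
    if not (machine.ram_ok and machine.cpu_ok):
        raise RuntimeError("POST failed: essential hardware not responding")


def find_boot_device(machine: Machine) -> str:
    """Firmware stage: pick the first device that holds a kernel image."""
    for name, image in machine.disks:
        if image is not None:
            return name
    raise RuntimeError("No bootable device found")


def bootloader(machine: Machine, device: str, params: dict) -> None:
    """Bootloader stage: copy the kernel from storage into RAM with its parameters."""
    image = dict(machine.disks)[device]
    machine.ram["kernel"] = image
    machine.ram["boot_params"] = params


def boot(machine: Machine) -> list:
    """Run the stages in order and return a log of the completed stages."""
    log = []
    post(machine)
    log.append("POST ok")
    device = find_boot_device(machine)
    log.append(f"boot device: {device}")
    bootloader(machine, device, {"quiet": True})
    log.append("kernel loaded into RAM")
    return log


pc = Machine(disks=[("usb0", None), ("ssd0", b"kernel-image")])
print(boot(pc))
# ['POST ok', 'boot device: ssd0', 'kernel loaded into RAM']
```

The order of the calls in `boot` mirrors the sequence described above: a failed check stops everything, and the kernel only reaches RAM after a bootable device has been found.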

Step 2 — Kernel Initialization (The Brain of the OS)

After the bootloader finishes its job, the system kernel begins executing. This stage marks a major turning point in how an operating system works, since the kernel now assumes full control over hardware resources.

The kernel forms the core component responsible for managing CPU scheduling, memory allocation, and hardware communication. Every modern OS relies on this layer to maintain stability and coordinate system activity.

These responsibilities define the operating system kernel as the central control structure inside the system. All applications and services ultimately rely on it to access hardware safely.

How the Kernel Loads into Memory

Kernel execution begins immediately after the bootloader loads it into memory. At this moment the processor starts running the kernel’s initialization routines.

According to Baeldung, the boot loader’s primary job is to locate the operating system kernel on disk, load it into memory, and execute it with the required parameters.

Once the kernel starts running, it begins setting up essential subsystems. Memory management structures are created, device drivers begin initializing, and interrupt handling mechanisms become active.

These steps illustrate how operating systems work internally. Instead of running applications immediately, the system first establishes a controlled environment where processes can execute safely.

Many responsibilities occur during this phase. The kernel identifies available hardware devices, initializes low-level drivers, and prepares scheduling systems that will later distribute processor time among running programs.

These initialization routines are fundamental to OS resource management. Without them, multiple programs would compete for hardware access and cause system instability.

The kernel also prepares the internal data structures at the heart of kernel design. These structures track running processes, memory allocation, and device activity.

Modern systems such as Microsoft Windows and Linux implement similar initialization stages, although their internal implementations differ.

Kernel Mode vs User Mode

A key principle of operating system architecture appears during kernel initialization: the separation between kernel mode and user mode.

Kernel mode provides full access to hardware and system memory. Only the OS kernel and trusted system components operate within this privileged environment.

User mode operates under strict restrictions. Applications run in this environment to prevent them from directly accessing hardware or critical memory regions.

This separation protects system stability. If an application crashes or attempts invalid operations, the failure remains isolated within user mode rather than affecting the entire system.

Privilege rings within modern processors enforce this structure. The kernel operates at the highest privilege level, while applications run at lower privilege levels.

These protections illustrate how an OS functions as both a resource manager and a stability mechanism. The kernel controls hardware access while ensuring that applications cannot interfere with critical system operations.
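The idea behind the two modes can be illustrated with a small model. Real processors enforce privilege levels in hardware, so the `ToyCPU` class, its `mode` attribute, and the `syscall` method below are purely hypothetical stand-ins meant to show the control flow.

```python
class ProtectionFault(Exception):
    """Raised when user-mode code attempts a privileged operation."""


class ToyCPU:
    """Illustrative model only; real CPUs enforce this in hardware."""

    def __init__(self):
        self.mode = "kernel"       # the kernel starts out in control
        self.device_register = 0   # stands in for a hardware register

    def write_device(self, value: int) -> None:
        """Privileged operation: only allowed in kernel mode."""
        if self.mode != "kernel":
            raise ProtectionFault("device access requires kernel mode")
        self.device_register = value

    def syscall(self, value: int) -> None:
        """Controlled entry point: enter kernel mode, do the work, switch back."""
        previous = self.mode
        self.mode = "kernel"
        try:
            self.write_device(value)
        finally:
            self.mode = previous


cpu = ToyCPU()
cpu.mode = "user"                  # an application is now running

try:
    cpu.write_device(42)           # direct hardware access is refused
except ProtectionFault as err:
    print("blocked:", err)

cpu.syscall(42)                    # the same request via the kernel succeeds
print(cpu.device_register, cpu.mode)   # 42 user
```

The application never gains kernel privileges itself. It only asks, and the privileged code decides what actually happens.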

Once these protections and core services are established, the operating system becomes ready to begin managing processes and executing applications.

Step 3 — Process Management and CPU Scheduling

Once the kernel finishes initialization, the system becomes ready to execute programs. Applications can launch, background services begin running, and the processor starts handling multiple tasks simultaneously. This stage reveals another critical aspect of how an operating system works.

A computer rarely runs a single program at a time. Web browsers, system services, messaging tools, and background utilities may all operate concurrently. Managing this activity is the job of process management.

Each running program becomes a process. The OS tracks these processes using internal data structures that store execution state, memory usage, and priority information. These structures allow the system to pause one task, switch to another, and resume execution later without losing progress.

This behavior defines a multitasking operating system. The system creates the illusion that many programs run at the same time even though a single-core processor executes only one instruction stream at any given moment.

Efficient OS resource management ensures that processor time is distributed fairly across active tasks. Without scheduling control, a single application could monopolize the CPU and make the system unresponsive.

The mechanism responsible for this coordination is the scheduler.

How the Scheduler Manages Multiple Tasks

The scheduler determines which process receives CPU time and for how long. It evaluates active processes, assigns time slices, and performs context switches that allow the processor to move between tasks.

This mechanism plays a central role in how an OS works during everyday computing. Each program receives a small execution window called a time slice. When that slice expires, the scheduler pauses the process and selects another task waiting in the queue.

Time slicing allows dozens of programs to operate smoothly even on a single processor core. The rapid switching happens so quickly that users perceive all tasks as running simultaneously.

Context switching is another essential operation in this stage. When the system pauses a process, it saves the current processor state, including the registers, the program counter, and memory references. Later, the scheduler restores this state so the program continues from the exact point where it stopped.
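A context switch can be sketched as saving and restoring a small record per process. The `PCB` (process control block) and `Core` classes below are hypothetical simplifications: a real kernel saves far more state and does so in architecture-specific code.

```python
from dataclasses import dataclass, field


@dataclass
class PCB:
    """Toy process control block holding the saved execution state."""
    pid: int
    program_counter: int = 0
    registers: dict = field(default_factory=lambda: {"acc": 0})


class Core:
    """Toy processor core with one program counter and one register."""

    def __init__(self):
        self.program_counter = 0
        self.registers = {"acc": 0}

    def load(self, pcb: PCB) -> None:
        """Restore a process's saved state onto the core."""
        self.program_counter = pcb.program_counter
        self.registers = dict(pcb.registers)

    def save(self, pcb: PCB) -> None:
        """Save the core's current state back into the process's PCB."""
        pcb.program_counter = self.program_counter
        pcb.registers = dict(self.registers)

    def run(self, steps: int) -> None:
        """Pretend to execute instructions: each bumps the counter and accumulator."""
        for _ in range(steps):
            self.program_counter += 1
            self.registers["acc"] += 1


core = Core()
a, b = PCB(pid=1), PCB(pid=2)

# Process A runs for 3 steps, then is paused.
core.load(a)
core.run(3)
core.save(a)

# Process B gets the core for 4 steps.
core.load(b)
core.run(4)
core.save(b)

# Process A resumes at step 3, not at 0.
core.load(a)
core.run(2)
core.save(a)

print(a.program_counter, b.program_counter)   # 5 4
```

Because each process's state lives in its own record, neither process can tell that the other ran in between.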

This coordination highlights core operating system functions. The OS balances responsiveness, fairness, and efficiency while distributing limited CPU resources among many competing tasks.

Different workloads require different scheduling strategies. Systems designed for servers may prioritize throughput, while desktop systems emphasize responsiveness to user input.

CPU Scheduling Algorithms

Operating systems implement several algorithms to determine how processes receive CPU time. These algorithms influence system responsiveness and overall performance.

| Algorithm | How It Works | Best Use Case |
|---|---|---|
| FCFS | First come first served | Simple workloads |
| Round Robin | Time slicing | Multitasking |
| Priority | Based on importance | Critical tasks |

First-Come First-Served scheduling executes tasks in the order they arrive. The method is simple but can cause long wait times if large processes occupy the processor.

Round Robin scheduling improves responsiveness by assigning equal time slices to processes. After a slice ends, the scheduler rotates to the next process in the queue. This strategy works well in multitasking environments.

Priority scheduling assigns CPU time based on process importance. Critical system services may receive higher priority while background tasks receive less frequent execution.
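The difference between FCFS and Round Robin shows up clearly in waiting times. The sketch below assumes all processes arrive at time zero and ignores context-switch overhead. The burst lengths and the quantum of 4 are made-up values for illustration.

```python
from collections import deque


def fcfs_waiting_times(bursts):
    """Waiting time per process when run in arrival order (all arrive at t=0)."""
    waits, elapsed = [], 0
    for burst in bursts:
        waits.append(elapsed)
        elapsed += burst
    return waits


def round_robin_waiting_times(bursts, quantum):
    """Waiting time per process under Round Robin (all arrive at t=0)."""
    remaining = list(bursts)
    finish = [0] * len(bursts)
    queue = deque(range(len(bursts)))
    clock = 0
    while queue:
        pid = queue.popleft()
        ran = min(quantum, remaining[pid])
        clock += ran
        remaining[pid] -= ran
        if remaining[pid] > 0:
            queue.append(pid)        # not finished: back to the end of the queue
        else:
            finish[pid] = clock
    # Waiting time is completion time minus the time actually spent running.
    return [finish[i] - bursts[i] for i in range(len(bursts))]


bursts = [24, 3, 3]                  # one long job followed by two short ones
print(fcfs_waiting_times(bursts))                    # [0, 24, 27]
print(round_robin_waiting_times(bursts, quantum=4))  # [6, 4, 7]
```

Under FCFS the two short jobs wait behind the long one, giving an average wait of 17 time units. Round Robin brings the average down to about 5.7, at the cost of the long job finishing later than it would have alone.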

These strategies demonstrate another dimension of how an operating system works. The OS constantly evaluates workloads, balancing performance and responsiveness across the entire system.

Step 4 — Memory Management and Virtual Memory

Every running process requires memory to store instructions and active data. Managing this memory efficiently forms another core responsibility of the OS.

The memory management subsystem of the operating system controls how RAM is allocated, tracked, and protected during program execution. Without structured management, programs could overwrite each other’s data and cause system instability.

When an application starts, the OS assigns a dedicated memory space. This isolation ensures that each program operates independently without interfering with others.

Effective RAM management also determines how much memory each process receives. Systems with limited RAM must allocate resources carefully to prevent performance issues.

Virtual memory introduces another layer of flexibility. Instead of relying solely on physical RAM, the OS can extend available memory using storage devices.

The concept plays an important role in how an OS works when multiple programs run simultaneously. When RAM becomes full, the OS temporarily moves inactive memory pages to a disk-based area known as the swap space or page file.

This process, known as paging, allows the system to maintain application stability even under heavy workloads. Active processes remain in RAM while less frequently used data moves to secondary storage.

Although accessing disk storage is slower than RAM, virtual memory prevents applications from failing due to memory shortages.
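Moving inactive pages out to swap can be modeled in a few lines. The sketch below assumes a least-recently-used (LRU) eviction policy, which is one common choice and not necessarily what a given OS uses. The `ToyMemory` class and its two-frame RAM are hypothetical.

```python
from collections import OrderedDict


class ToyMemory:
    """Illustrative pager: a fixed number of RAM frames plus an unbounded swap area."""

    def __init__(self, frames: int):
        self.frames = frames
        self.ram = OrderedDict()   # page -> data, least recently used first
        self.swap = {}             # pages that were moved out to disk
        self.page_faults = 0

    def access(self, page: int) -> str:
        """Touch a page, loading it into RAM and evicting the LRU page if RAM is full."""
        if page in self.ram:
            self.ram.move_to_end(page)               # mark as most recently used
            return self.ram[page]
        self.page_faults += 1
        data = self.swap.pop(page, f"data-{page}")   # reload from swap, or first touch
        if len(self.ram) >= self.frames:
            victim, victim_data = self.ram.popitem(last=False)   # least recently used
            self.swap[victim] = victim_data
        self.ram[page] = data
        return data


mem = ToyMemory(frames=2)
for page in [1, 2, 1, 3, 2]:
    mem.access(page)

print(list(mem.ram), sorted(mem.swap), mem.page_faults)   # [3, 2] [1] 4
```

Every access still succeeds even though only two pages fit in RAM. The cost appears as page faults, each of which would mean a slow disk access on a real machine.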

These techniques demonstrate how operating system architecture balances efficiency and reliability. Memory management ensures that programs receive the resources they need without compromising system stability.

Step 5 — File System and Storage Handling

Persistent data storage forms another major responsibility of the OS. Applications constantly read files, save documents, and access system resources stored on disks or solid-state drives.

The file system organizes this data into structured directories and files. Without this structure, locating or modifying information would become extremely difficult.

Every file operation follows a structured flow. When an application requests data, the OS translates that request into storage instructions. The storage controller retrieves the required blocks, and the OS returns the data to the requesting program.
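The request flow described above can be observed from an application's side using Python's `os` module, whose functions are thin wrappers around the operating system's file calls. The example works on a temporary scratch file, and the file contents are arbitrary.

```python
import os
import tempfile

# Create a scratch file so the example does not touch real data.
fd, path = tempfile.mkstemp()
try:
    os.write(fd, b"hello, file system")   # write request handled by the OS
    os.lseek(fd, 0, os.SEEK_SET)          # move back to the start of the file
    data = os.read(fd, 5)                 # read request: first 5 bytes
    size = os.fstat(fd).st_size           # metadata kept by the file system
finally:
    os.close(fd)
    os.remove(path)

print(data, size)   # b'hello' 18
```

The program never addresses disk blocks or controllers. It only names a file descriptor and a byte count, and the OS handles the rest.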

This process illustrates another part of how operating systems work during everyday computing. The OS acts as an intermediary between software and storage hardware.

Storage coordination also includes permissions and access control. Users and applications receive defined privileges that determine which files they can read or modify.
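Permission bits can be inspected and changed through the same `os` interface. This sketch assumes a POSIX-style permission model. On Windows, `os.chmod` only toggles a read-only flag, so the exact mode value differs there.

```python
import os
import stat
import tempfile

fd, path = tempfile.mkstemp()
os.close(fd)
try:
    os.chmod(path, stat.S_IRUSR)                  # owner may read, nobody may write
    mode = stat.S_IMODE(os.stat(path).st_mode)    # permission bits as stored by the OS
    owner_can_read = bool(mode & stat.S_IRUSR)
    owner_can_write = bool(mode & stat.S_IWUSR)
finally:
    os.chmod(path, stat.S_IRUSR | stat.S_IWUSR)   # restore so cleanup works everywhere
    os.remove(path)

print(owner_can_read, owner_can_write)   # True False
```

The example reads the stored bits and does not attempt a write, since an administrator account may be allowed to bypass these checks.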

Modern computer operating systems use different file system technologies depending on their design goals. Windows commonly uses NTFS, while Linux systems rely on file systems such as ext4.

Although the implementations differ, the core concept remains the same. The OS manages storage access, organizes data structures, and ensures consistent file operations across the system.

These storage mechanisms support efficient storage management, allowing applications to store and retrieve information without directly interacting with hardware devices.

Step 6 — Device Drivers and Hardware Communication

Hardware devices such as keyboards, printers, graphics cards, and network adapters require specialized software to interact with the operating system. This interaction occurs through device drivers.

Drivers provide an abstraction layer that allows applications to communicate with hardware without needing to understand device-specific details. This abstraction plays a central role in the device management side of operating system design.

Each hardware device exposes capabilities through driver interfaces. The OS communicates with these drivers using structured commands that translate software requests into hardware operations.

These interactions often rely on the system call mechanism of the operating system. Applications issue system calls when they need to perform actions that require kernel access, such as reading from storage or sending network data.

Drivers interpret these requests and forward them to the appropriate hardware components.
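System calls are easy to see from user space. In the sketch below, each `os` function asks the kernel to do something the program cannot do on its own. A pipe stands in for a device because it needs no special hardware.

```python
import os

pid = os.getpid()                  # ask the kernel for this process's ID

read_end, write_end = os.pipe()    # ask the kernel to create a pipe
os.write(write_end, b"ping")       # kernel copies the bytes into the pipe buffer
os.close(write_end)
message = os.read(read_end, 16)    # kernel copies them back out
os.close(read_end)

print(pid > 0, message)            # True b'ping'
```

The buffer that holds `b"ping"` between the write and the read lives inside the kernel, which is why both operations have to go through it.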

This architecture demonstrates how an OS functions as the communication hub between software and physical devices. Applications operate at a high level, while the OS manages low-level hardware interaction.

A simple keyboard input illustrates the process clearly. When a user presses a key, the keyboard hardware sends a signal to the system. The keyboard driver interprets the signal, and the OS forwards the resulting character to the active application.
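The keyboard path can be modeled as a driver that translates raw codes and an OS layer that delivers the result. Everything here is a hypothetical simplification: the three-entry `SCANCODES` table, the `KeyboardDriver` class, and `ToyOS` are illustrative names, and real drivers deal with key releases, modifiers, and layouts.

```python
from collections import deque

# Tiny illustrative scancode table; real drivers use larger, layout-aware maps.
SCANCODES = {0x1E: "a", 0x30: "b", 0x2E: "c"}


class KeyboardDriver:
    def __init__(self):
        self.events = deque()

    def handle_interrupt(self, scancode: int) -> None:
        """Called when the hardware signals a key press; translate and queue it."""
        char = SCANCODES.get(scancode)
        if char is not None:
            self.events.append(char)


class ToyOS:
    def __init__(self, driver: KeyboardDriver):
        self.driver = driver
        self.focused_app_input = []   # stands in for the active application's input

    def dispatch(self) -> None:
        """Forward queued key events to the application that has focus."""
        while self.driver.events:
            self.focused_app_input.append(self.driver.events.popleft())


driver = KeyboardDriver()
system = ToyOS(driver)
for code in (0x2E, 0x1E, 0x30, 0xFF):   # 0xFF is unknown here and is ignored
    driver.handle_interrupt(code)
system.dispatch()

print("".join(system.focused_app_input))   # cab
```

The application only ever sees characters. The raw codes stay inside the driver, which is what lets different keyboards work with the same program.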

This layered approach ensures compatibility across diverse hardware devices while maintaining system stability and security.

Through device drivers, the operating system maintains consistent communication with hardware components while protecting the core system from direct application access.

Real-World Walkthrough — How an Operating System Works When You Open an App

A practical example helps illustrate how an operating system works during normal computer use. Consider a simple action such as clicking an application icon on the desktop. The action looks trivial, yet several coordinated steps occur within milliseconds.

When the user clicks an application, the OS receives an input signal from the mouse driver. The system interprets the click event and identifies which program the user requested to open. At this moment the operating system begins preparing the environment required for that application to run.

From that point forward, the execution sequence typically follows several steps:

- The system locates the application file stored on disk.

- The OS verifies file permissions and confirms the user is allowed to run the program.

- The executable file begins loading into memory.

- A new process entry is created inside the process table.

- The scheduler prepares CPU time for the new process.

- Memory space is allocated for the program code and working data.

Each step occurs extremely quickly, usually within a fraction of a second.
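The steps listed above happen inside the OS, but their effect is visible whenever one program starts another. As a rough illustration, the sketch below launches a second Python interpreter as a child process. The one-line child program is an arbitrary choice for the example.

```python
import os
import subprocess
import sys

# Ask the OS to start a new process running a second Python interpreter.
result = subprocess.run(
    [sys.executable, "-c", "import os; print(os.getpid())"],
    capture_output=True,   # collect what the child prints
    text=True,
    check=True,            # raise if the child exits with an error
)

child_pid = int(result.stdout.strip())
print("parent:", os.getpid())
print("child: ", child_pid)
print("exit code:", result.returncode)
```

The child reports a different process ID from the parent, which reflects the new process entry the OS created for it. Locating the executable, loading it, and allocating memory all happened inside the single `subprocess.run` call.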

This sequence demonstrates how an OS works during everyday computer activity. Input arrives from hardware, the OS interprets the request, resources are allocated, and the application begins execution within a controlled environment.

Windows vs Linux Execution Flow

The execution flow follows similar principles in both Windows and Linux, although internal implementations differ.

In Windows, clicking an application triggers the Windows loader, which prepares the executable file and loads required libraries into memory. The system then registers the new process and schedules it for CPU execution.

Linux systems follow a comparable path. The kernel loads the executable file, creates a process structure, and assigns memory resources before scheduling the process.

Both systems rely on structured resource allocation, process scheduling, and memory management to ensure the application runs smoothly without disrupting other processes.

Conclusion

How an operating system works becomes clearer when examining the sequence that transforms powered hardware into a functioning computer system.

The process begins with hardware initialization and boot loading. Firmware prepares the hardware environment, while the bootloader transfers control to the kernel. Once the kernel starts running, it establishes the core system environment.

From that point forward the operating system manages processor scheduling, memory allocation, storage access, and hardware communication. Applications operate within controlled environments where system resources remain organized and protected.

These mechanisms form the foundation of reliable computing. Process isolation prevents applications from interfering with one another. Memory management ensures efficient use of RAM. Hardware drivers allow diverse devices to function under a unified system interface.

Learning how an operating system works helps reveal the invisible coordination happening behind every mouse click, file operation, and application launch.

This knowledge also strengthens technical understanding for developers, IT professionals, and anyone interested in system-level computing. The operating system remains the central layer that transforms raw hardware into a usable digital environment.

FAQs About How an Operating System Works

What is the main function of an operating system?

The main role of an operating system is to manage hardware resources and provide services for software applications. It coordinates processor scheduling, memory usage, storage access, and device communication so programs can run efficiently.

How does an operating system manage memory?

The operating system tracks how RAM is used and assigns memory spaces to running programs. It also uses techniques like virtual memory and paging to extend available memory when physical RAM becomes limited.

What happens if the operating system fails to boot?

If the operating system cannot boot, the computer cannot load the kernel or initialize system services. The machine may display firmware errors, recovery options, or remain stuck during the startup process.

Is Windows different from Linux in how the OS works?

Both systems follow similar principles of how an operating system works, including process management, memory control, and hardware interaction. Differences mainly appear in architecture design, kernel structure, and system tools.

Can a computer run without an operating system?

A computer technically can power on without an operating system, but it cannot run normal applications or manage hardware resources effectively. The OS provides the environment required for software execution.